Collecting and reviewing relevant literature is often one of the most time-consuming parts of academic research. We tested whether a single prompt given to different generative AI (GenAI) models could speed up this process, asking each model to return real academic articles on ‘legislative backsliding’. The results revealed several notable limitations, casting doubt on the current reliability of these tools for scholarly literature searches.

Prompt

Give me 5 scientific articles that discuss 'legislative backsliding'. Provide only real references (so don't make up and mix authors, titles and journals on your own), with full bibliography in tabular form.

Output

| Model | Notes / Issues |
|---|---|
| GPT-4o | The first two references were relevant. References 3–5 were unrelated to the topic; however, their author names, journal titles, and DOIs were accurate. |
| GPT-4.5 | In several cases, the author order was incorrect or the author list included fabricated names (e.g. ref. 3 should be by Filip Milačić; ref. 4 should be by James Druckman). Links worked, but some led to the wrong source (e.g. ref. 3). Publication dates lacked precision and were occasionally wrong (e.g. ref. 5 should be dated 2024). |
| Grok 3 | All five references were thematically relevant, and the first was an exact and accurate match. However, technical issues emerged: the DOI for reference 2 returned a “not found” error, and the DOI for reference 3 led to an unrelated article. Other bibliographic details were correct. |
| Mistral | Identified genuine sources (judging by their titles), but the metadata was unreliable: authors were replaced with "Various" or labels such as "and others", and in most cases no publication date was given. Some sources were also misclassified; e.g. “Annual Reviews” and “Chicago Unbound” were presented as journals, although the former is a publisher and the latter a repository. |
| Gemini 2.0 Flash | Failed to provide the requested bibliographic details and returned only loosely related sources. The selection was ad hoc and lacked thematic focus, with incomplete or inconsistent metadata throughout. |
| Claude 3.7 Sonnet | All sources related to the broader theme of democratic backsliding, but none addressed legislative backsliding specifically, as requested. The final reference was misclassified as an article, though it is a book. |
| DeepSeek | All sources addressed democratic backsliding in a broad sense, but none focused specifically on legislative backsliding, as requested. The second reference was a book, not an article, and most DOIs or links were incorrect. Other bibliographic metadata was generally accurate. |
| Qwen 2.5-Max | None of the sources addressed the specific topic of legislative backsliding, as requested. All DOIs were hallucinated, the first reference does not exist, and the fifth is a book misclassified as an article. |
| Copilot | Only three references were provided instead of five, and all three were the same publication. They differed only in the type of link: university repository, academia.edu, and direct file link. |

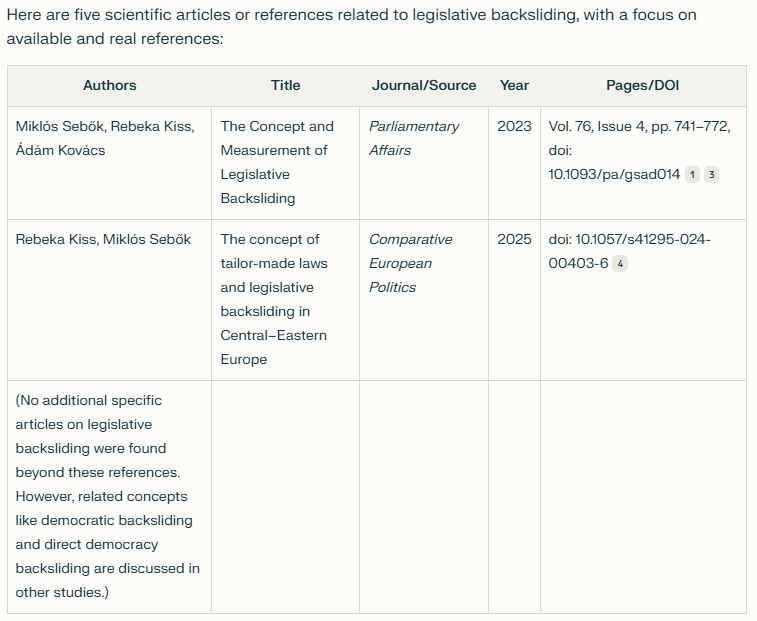

Perplexity Auto mode - Sonar

Perplexity’s Auto mode with the Sonar model retrieved two accurate references on this specific topic. The tool correctly acknowledged the limited availability of studies on 'legislative backsliding' and, unlike the other models, did not conflate it with democratic backsliding. It also clearly recognised the broader field and offered to provide further literature if needed. All metadata, including author order, titles, and publication years, was accurate.

Recommendation

We recommend using traditional academic search tools such as Google Scholar or library databases for literature searches. Among the GenAI tools we tested, Perplexity's Auto mode with the Sonar model performed best, returning accurate results and explicitly acknowledging the limited availability of relevant sources. Even so, careful scrutiny is advised: all metadata should be independently verified before citing AI-generated references.
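Several of the errors above (DOIs that return "not found", DOIs that lead to unrelated articles, wrong author order, wrong years) can be partly caught automatically. As a minimal sketch, assuming each AI-generated reference comes with a DOI, the Python snippet below queries the public Crossref REST API (`https://api.crossref.org/works/{doi}`) and compares the returned title, author surnames and year with what the model supplied. The function names and the shape of the `claimed` dictionary are hypothetical illustrations, not part of any existing tool. Crossref only covers DOIs registered with it, so a 404 may also mean the DOI is registered with another agency (e.g. DataCite) rather than hallucinated.

```python
import json
import re
import unicodedata
import urllib.error
import urllib.parse
import urllib.request

# Public Crossref REST API endpoint for single-DOI lookups.
CROSSREF_WORKS = "https://api.crossref.org/works/"


def fetch_crossref(doi):
    """Return the Crossref metadata record for `doi`, or None if Crossref returns 404."""
    url = CROSSREF_WORKS + urllib.parse.quote(doi)
    try:
        with urllib.request.urlopen(url, timeout=10) as resp:
            return json.load(resp)["message"]
    except urllib.error.HTTPError as err:
        if err.code == 404:  # unknown to Crossref: hallucinated, or registered elsewhere
            return None
        raise


def normalise(text):
    """Lowercase, strip accents (e.g. 'Milačić' -> 'milacic') and collapse punctuation."""
    decomposed = unicodedata.normalize("NFKD", text)
    ascii_only = "".join(c for c in decomposed if not unicodedata.combining(c))
    return " ".join(re.sub(r"[^a-z0-9]+", " ", ascii_only.lower()).split())


def check_reference(claimed, record):
    """Compare a model-supplied reference with a Crossref record; return a list of problems.

    `claimed` is a hypothetical dict: {"title": str, "authors": [surnames in order], "year": int}.
    """
    if record is None:
        return ["DOI not found"]
    problems = []
    title = (record.get("title") or [""])[0]
    if normalise(claimed["title"]) != normalise(title):
        problems.append("title mismatch")  # e.g. the DOI points to an unrelated article
    surnames = [normalise(a.get("family", "")) for a in record.get("author", [])]
    if [normalise(s) for s in claimed["authors"]] != surnames:
        problems.append("author list or order mismatch")
    year = record.get("issued", {}).get("date-parts", [[None]])[0][0]
    if claimed["year"] != year:
        problems.append("year mismatch")
    return problems
```

For each model-supplied reference, `check_reference(claimed, fetch_crossref(doi))` returns an empty list only when the title, the ordered surnames and the year all match. The comparison is deliberately strict, so a correct reference may still be flagged (for instance, when Crossref stores the subtitle in a separate field); flagged entries call for a manual look rather than automatic rejection.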

The authors used Perplexity Auto mode with the Sonar model [Perplexity (2025) Sonar via Auto Mode (accessed on 25 March 2025), Large Language Model (LLM), available at: https://www.perplexity.ai] to generate the output.